Reference Resource

A curated companion reference to the most significant external research, frameworks, and guidance informing ThinkCapital's work on AI governance for government.

About This Resource: This Body of Knowledge is a companion to ThinkCapital's research program — not a substitute for the primary sources. We curate and contextualize the work of NIST, OMB, and peer researchers here to help practitioners navigate the growing landscape of AI governance frameworks. Graphics, threshold models, and maturity level diagrams referenced in our research are hosted in the sections below.

National Institute of Standards and Technology

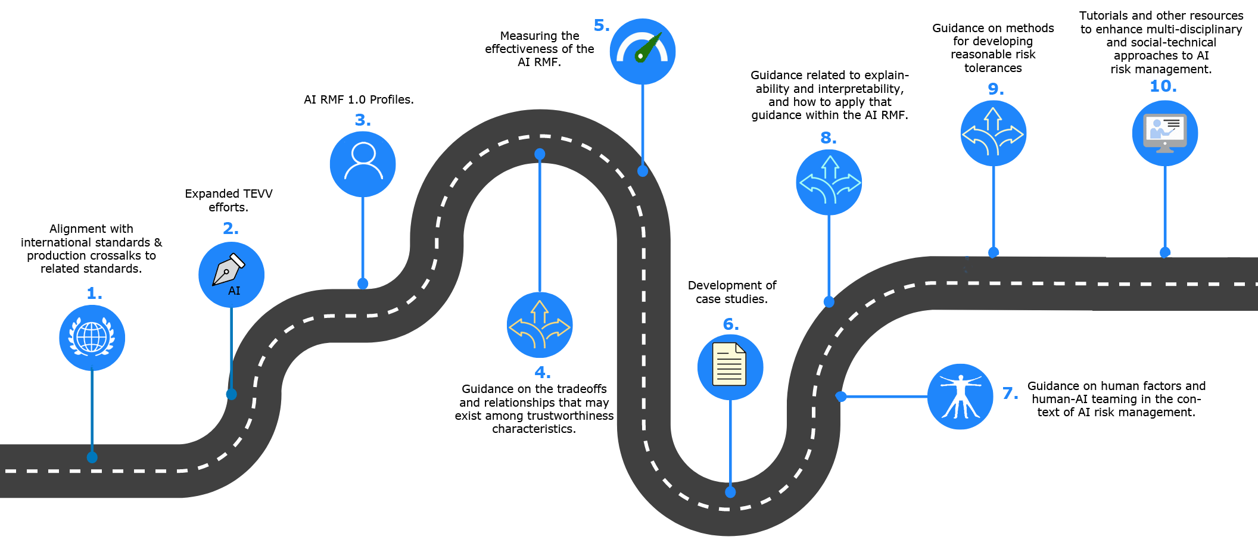

The NIST AI RMF, released in January 2023, provides the foundational voluntary framework for AI risk management across all sectors — with particular applicability to government contexts. Its four core functions — GOVERN, MAP, MEASURE, and MANAGE — provide the structural backbone for ThinkCapital's AI governance assessment methodology.

ThinkCapital perspective: The RMF correctly identifies measurement as a core function, but implementation guidance on how to operationalize the MEASURE function in government contexts remains underdeveloped. This is a primary focus of our research.

The NIST AI RMF Playbook provides suggested actions and indicative outcomes for each subcategory of the RMF. For government agencies moving from framework adoption to operational implementation, the Playbook is the primary implementation reference.

NIST SP 1270 is a critical companion document for agencies deploying AI in high-stakes decision contexts. It addresses the sociotechnical challenge of bias in AI systems, providing a taxonomy of bias types and approaches to measurement and mitigation.

Office of Management and Budget

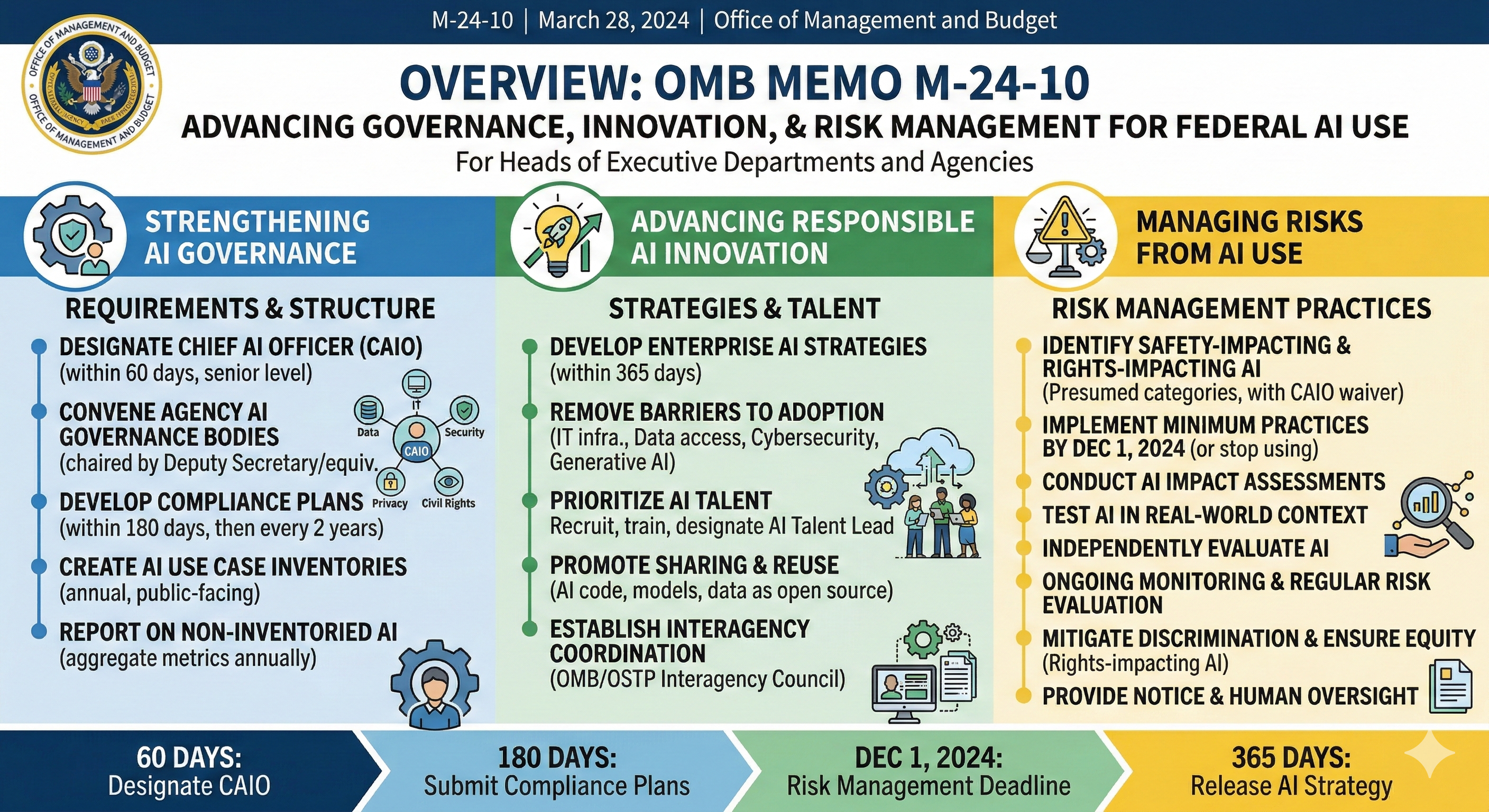

OMB Memorandum M-24-10 (March 2024) establishes binding requirements for federal agencies on AI governance, including the designation of Chief AI Officers, minimum risk management practices, and requirements for rights-impacting and safety-impacting AI systems. This memo frames the compliance context within which most federal AI governance work occurs.

ThinkCapital perspective: M-24-10's requirements for minimum risk practices are a floor, not a ceiling. The meaningful question for agencies is how to build measurement and oversight capabilities that genuinely fulfill the intent — not merely satisfy checkbox compliance.

This earlier OMB guidance established the regulatory framework for AI, including 10 principles for AI regulation. It provides important context for understanding the evolution of federal AI policy toward M-24-10.

Maturity Models

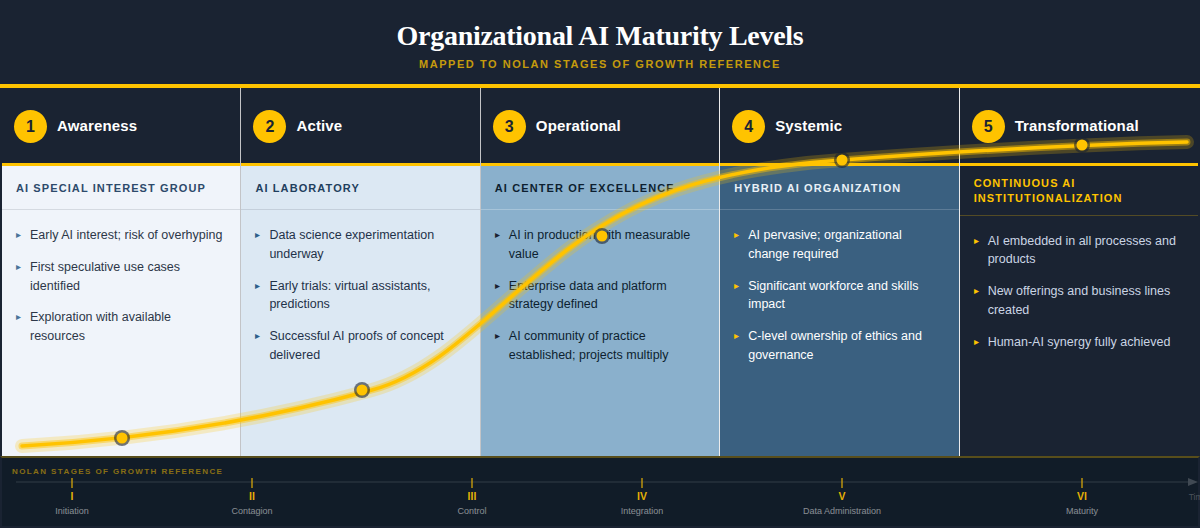

Multiple researchers and institutions have developed AI maturity models — staged frameworks describing the progression from nascent AI capability to advanced, governed, optimizing AI programs. This section collects the most relevant models with ThinkCapital's comparative annotations.

The NIST AI RMF supports maturity profiling through its "Profiles" mechanism. Current-state and target-state profiles together define an agency's maturity gap and improvement roadmap. This approach aligns AI maturity assessment directly with risk management practice.
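The current-state versus target-state comparison can be sketched as a simple gap calculation. This is a minimal illustration only: the 0-4 scoring scale, the `profile_gap` helper, and the example scores are hypothetical assumptions, not part of the NIST AI RMF, which does not prescribe numeric scores for Profiles.

```python
# Hypothetical sketch: scoring scale and helper name are illustrative
# assumptions, not defined by the NIST AI RMF.
RMF_FUNCTIONS = ("GOVERN", "MAP", "MEASURE", "MANAGE")


def profile_gap(current, target):
    """Return per-function gaps (target minus current), largest gap first."""
    gaps = {f: target[f] - current[f] for f in RMF_FUNCTIONS}
    # Sorting by gap size gives a rough priority order for a roadmap.
    return dict(sorted(gaps.items(), key=lambda kv: kv[1], reverse=True))


# Illustrative scores on an assumed 0-4 scale (not empirical data).
current_profile = {"GOVERN": 2, "MAP": 2, "MEASURE": 0, "MANAGE": 1}
target_profile = {"GOVERN": 3, "MAP": 3, "MEASURE": 3, "MANAGE": 3}

roadmap = profile_gap(current_profile, target_profile)
for function, gap in roadmap.items():
    print(f"{function}: gap of {gap}")
```

In this made-up example the largest gap falls on MEASURE, so that function would head the improvement roadmap.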

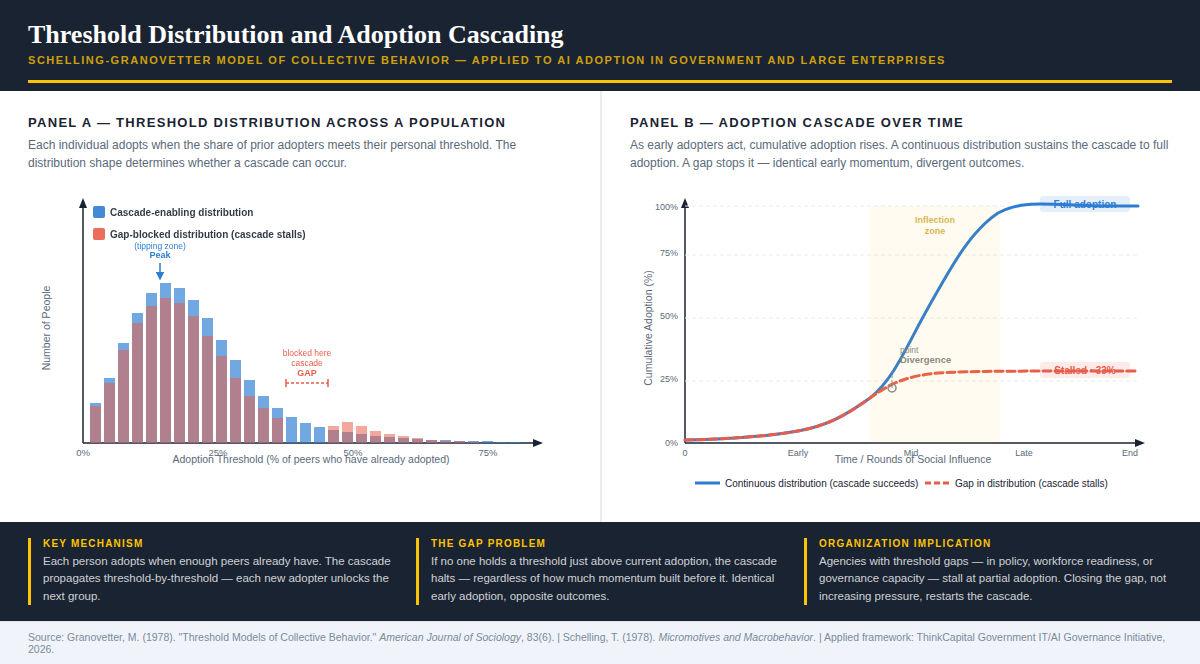

Adoption Threshold Research

Thomas Schelling's work on segregation dynamics and Mark Granovetter's threshold model of collective behavior provide the theoretical foundation for understanding why AI adoption sometimes accelerates past a tipping point and why it sometimes never does, even when individual attitudes are favorable. Each person in an organization adopts when the share of prior adopters meets their personal threshold. It is the distribution of those thresholds (as opposed to the average attitude) that drives whether adoption cascades to scale or stalls at partial penetration.

Panel A — Threshold Distribution: When thresholds are continuously distributed (blue), each wave of adopters unlocks the next group, producing a self-sustaining cascade. When a gap exists in the distribution, the cascade halts regardless of how much early momentum has built. Two organizations with identical early adoption rates can reach completely opposite outcomes depending solely on whether their threshold distribution is continuous or contains a gap.

Panel B — Adoption Cascade Over Time: The cascade dynamics play out as an S-curve when the distribution is continuous, reaching full adoption through the inflection zone. The gap-blocked organization tracks the same curve through early and mid stages, then diverges sharply. It plateaus permanently at roughly one-third adoption. This explains a common organizational AI pattern: strong pilot performance, enthusiastic early adopters, and visible executive support, followed by enterprise-wide stagnation that pressure and communication campaigns cannot break.
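The two panels can be reproduced with a small simulation of the threshold rule. The sketch below assumes a hypothetical 100-person organization in which each threshold is the number of prior adopters a person needs to see (equivalent to a percentage share at this size). The function name `run_cascade` and both threshold lists are illustrative assumptions, not ThinkCapital data.

```python
def run_cascade(thresholds):
    """Apply the threshold rule round by round until adoption stops growing.

    thresholds[i] is the number of prior adopters person i needs to see
    before adopting. Returns the adopter count after each round.
    """
    adopted = 0
    history = [adopted]
    while True:
        # Everyone whose threshold is already met counts as an adopter.
        now = sum(1 for t in thresholds if t <= adopted)
        if now <= adopted:  # no new adopters: cascade has settled
            break
        adopted = now
        history.append(adopted)
    return history


# Continuous distribution: one person at every threshold from 0 to 99.
continuous = list(range(100))

# Gapped distribution: nobody holds a threshold between 33 and 39.
# The seven people who would have sat there need 40 adopters instead.
gapped = list(range(33)) + [40] * 7 + list(range(40, 100))

continuous_history = run_cascade(continuous)
gapped_history = run_cascade(gapped)

print("continuous final adoption:", continuous_history[-1])
print("gapped final adoption:", gapped_history[-1])
```

Both organizations follow the same path for the first 33 rounds. The continuous one reaches all 100 people, while the gapped one stalls at 33 because nobody's threshold falls between 33 and 39, even though most of the remaining thresholds are unchanged.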

ThinkCapital application of this theory: We map organizational stakeholders along a threshold distribution and identify the minimum viable coalition needed to initiate an adoption cascade. Client organizations use our policy, governance, and workforce interventions to close the critical gaps in the distribution that hold back the resistant middle. The goal is not to convince skeptics but to restructure the conditions under which adoption becomes individually rational for the next threshold group.

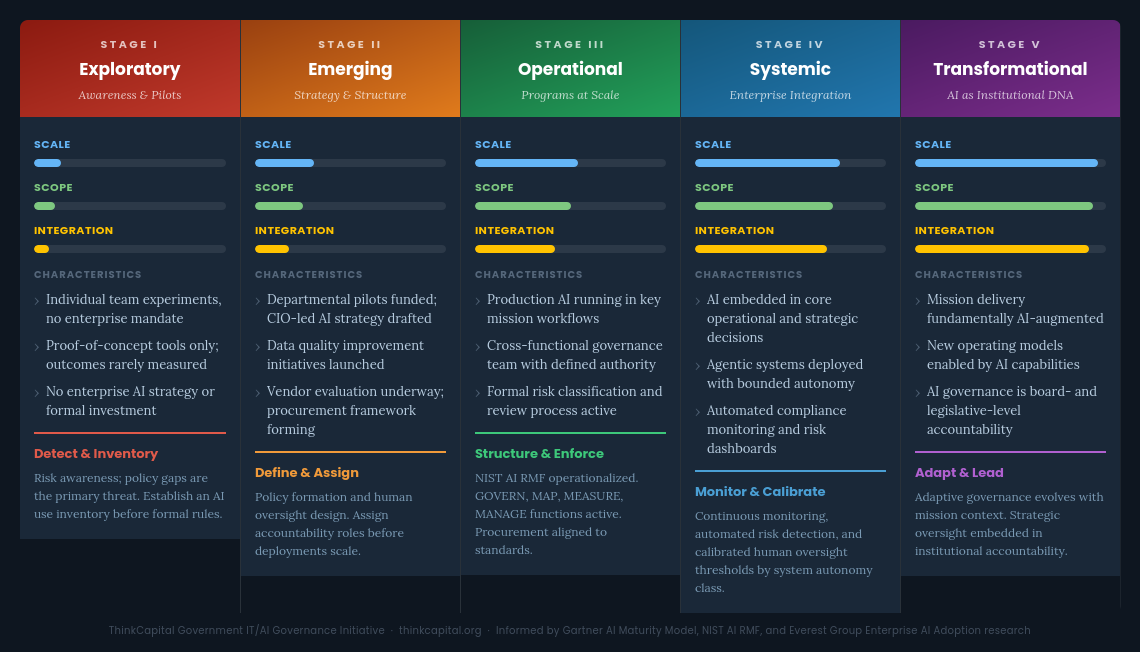

AI Growth Stages

Distinct from maturity models — which describe governance capability — AI growth stage models describe the evolution of an agency's AI program in terms of scale, scope, and organizational integration. Most published AI maturity models conflate two different questions: how sophisticated is the technology, and how deeply has it been embedded into organizational operations? Separating these dimensions clarifies what governance strategy is actually required at each point.

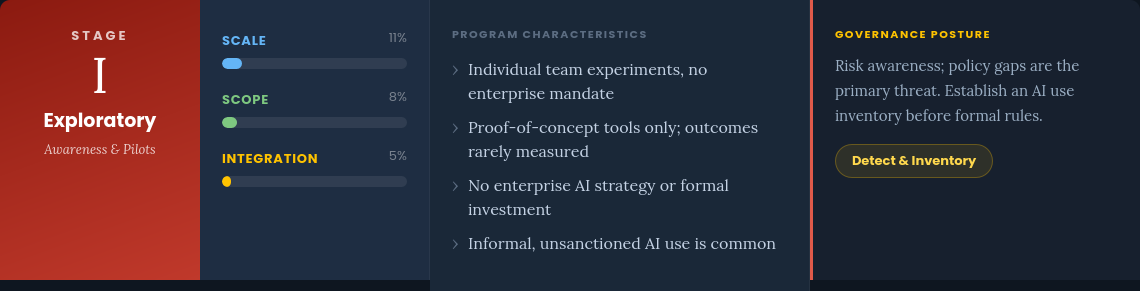

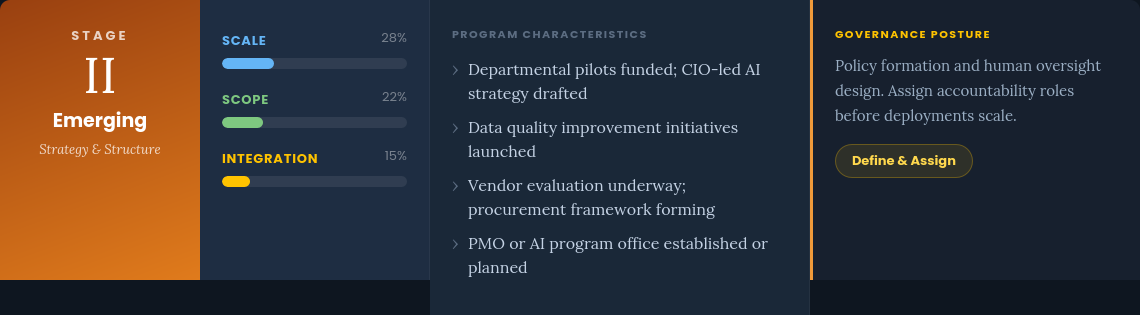

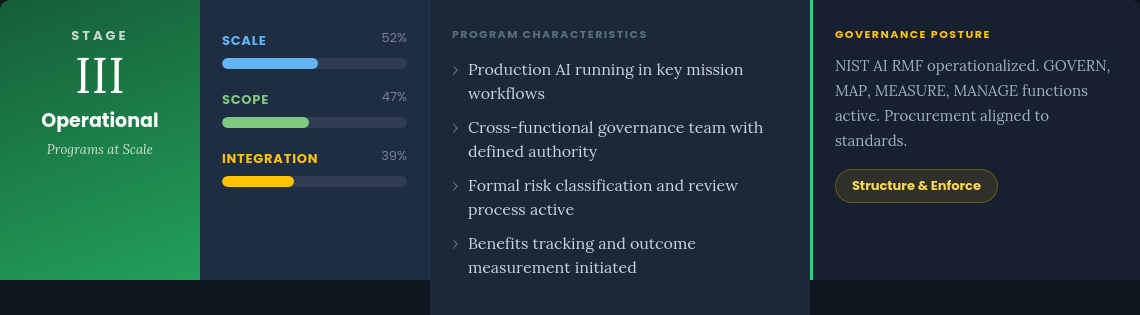

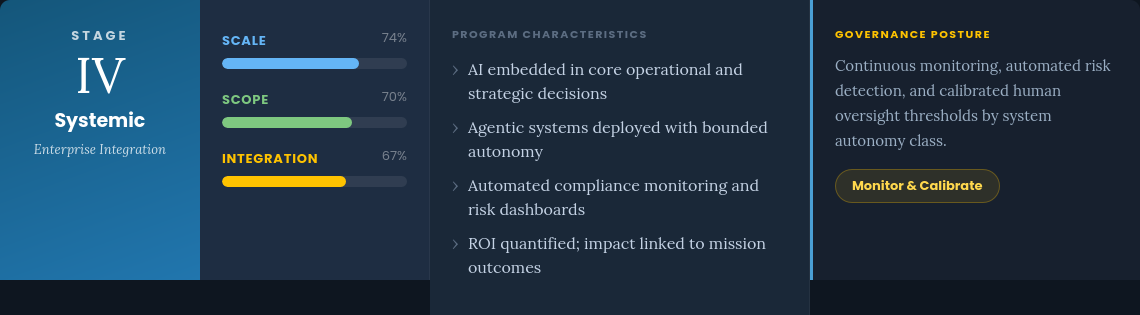

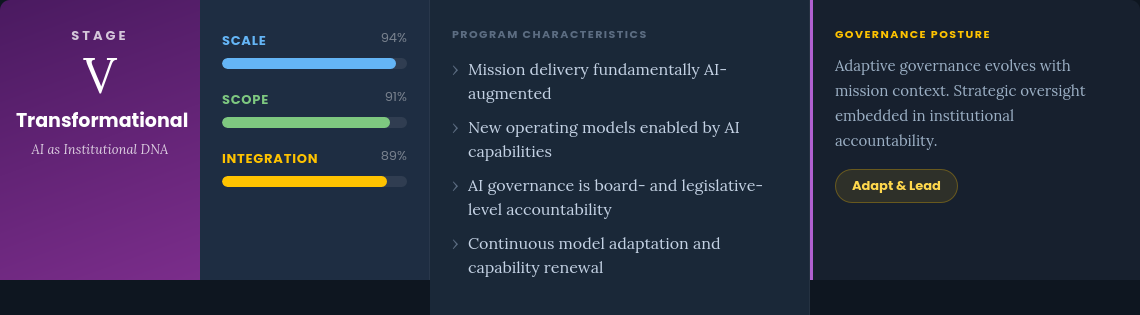

The framework proposes five stages, from initial experimentation through transformational integration. At each stage, the governance imperative shifts — early-stage programs need visibility and accountability assignment; mid-stage programs need structural frameworks and measurement; late-stage programs need continuous monitoring and adaptive oversight.

Detailed views of each stage follow below.

Figures 2–6. Dimension bars show illustrative relative positions, not empirical measurements. Model synthesis informed by Gartner AI Maturity Model (2024), NIST AI RMF (2023), and Everest Group Enterprise AI Adoption research. Source: ThinkCapital Government IT/AI Governance Initiative, 2026.

Three dimensions, not one. Most maturity models collapse program development into a single scale, obscuring the distinction between an agency running many small pilots (high scale, low integration) and one that has deeply embedded a single system into core decisions (low scale, high integration). These configurations carry different governance risks and demand different oversight architectures.

Governance as a proportional response. The model reflects a core ThinkCapital hypothesis: governance overhead should scale with deployment risk, not with abstract maturity scores. A Stage I program does not need NIST AI RMF operationalization — it needs an inventory. A Stage IV program cannot rely on periodic audits — it needs continuous automated monitoring.

The Stage III inflection point. Operational (Stage III) is where most current federal and large state agency programs are positioned or aspiring to be. This is where NIST AI RMF adoption becomes operationally meaningful rather than purely aspirational. ThinkCapital’s research focuses on what distinguishes durable governance from documentation compliance at this inflection point.

Agentic AI creates a Stage IV forcing function. The emergence of agentic systems compresses the transition from Stage III to Stage IV. Agencies deploying agentic AI without Stage IV governance architecture are operating in a structural oversight gap — the central concern driving ThinkCapital’s second active research stream on meaningful human oversight at operational scale.

This taxonomy is a working hypothesis under active research validation. The framework will be revised as empirical evidence accumulates through the GIAG Initiative structured interview program.

Peer Institutions & Researchers

This section will expand as ThinkCapital's research program develops. The following are foundational references currently informing our work.

GAO's AI Accountability Framework for Federal Agencies and Other Entities (2021) provides a complementary governance lens to the NIST RMF, organized around four principles: governance, data, performance, and monitoring. It is particularly useful in oversight-committee contexts.

The White House Office of Science and Technology Policy's Blueprint for an AI Bill of Rights (2022) establishes five principles framing government expectations for AI systems affecting the public: safe and effective systems, algorithmic discrimination protections, data privacy, notice and explanation, and human alternatives.